Simon Willison asked on Twitter:

What are the most importantly things that people need to understand in order to effectively interact with LLM-based systems like ChatGPT or Claude?

Here are the replies. (I used text-embedding-3-small to embed and cluster them into 20 clusters and used OpenAI GPT-4o-mini to label the clusters. There are misclassifications but the themes are accurate.)
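The embed-then-cluster step described above can be sketched roughly as follows. This is a minimal illustration, not the code used for this post: it assumes the embeddings have already been fetched as plain lists of floats, and the toy 2-dimensional vectors, the `kmeans` helper and `k=2` are all hypothetical stand-ins (the post used 20 clusters over real embeddings).

```python
import math


def cosine_distance(a, b):
    # 1 - cosine similarity; assumes neither vector is all zeros.
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm


def mean(vectors):
    # Component-wise average of a non-empty list of vectors.
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def kmeans(vectors, k, iterations=10):
    # Deterministic init (an assumption for reproducibility):
    # the first k vectors serve as the starting centroids.
    centroids = [list(v) for v in vectors[:k]]
    labels = [0] * len(vectors)
    for _ in range(iterations):
        # Assign each vector to its nearest centroid.
        labels = [
            min(range(k), key=lambda c: cosine_distance(v, centroids[c]))
            for v in vectors
        ]
        # Move each centroid to the mean of its members.
        for c in range(k):
            members = [v for v, label in zip(vectors, labels) if label == c]
            if members:
                centroids[c] = mean(members)
    return labels


# Toy stand-ins for reply embeddings (real ones have many more dimensions).
embeddings = [[1.0, 0.1], [0.1, 1.0], [0.9, 0.2], [0.2, 0.9]]
labels = kmeans(embeddings, k=2)
```

Each cluster's replies would then be handed to a model to propose a label, which is where the misclassifications mentioned above can creep in.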

Provide Clear Context and Avoid Leading Questions

- 1. Provide relevant context but not too much

2. Models are total “yes men” – be careful not to imply your perspective if you want an objective response

3. Learn when to iterate vs start a new chat

4. Provide examples (especially for output structure) – Tweet

- 1. Ask questions that the other person can understand.

2. Ask questions while predicting what the other person will respond. It’s the same as the human’s. – Tweet

- 1. Ensure the system knows the relevant context. Give a detailed backstory of what you’re trying to do with it and why.

2. One thing at a time. Make the task as specific as possible and if there are multiple things that need to be done, ask it to them in their sort of natural – Tweet

- The “most importantly things” are probably to ask for step-by-step before answering and to try to not ask leading questions to avoid its sycophancy bias. – Tweet

- You must provide a diverse, distinct set of examples if you want it to be robust and generalize in real world systems. – Tweet

- Always ask for both strengths and weaknesses to get more balanced perspectives, and make sure the model can tell you as many facts as possible before committing itself to an answer. – Tweet

- Rule 1:Avoid chatgpt unless they release a better model than Sonnet 3.5. – Tweet

- Strongly insist that it shouldn’t passively agree with you. Encourage it to interrupt with clarifying questions that would meaningfully improve the output. – Tweet

- Avoid leading questions if you care about the answer. They are way too polite to contradict the user. – Tweet

- – It’s not Google, so use full sentences, not just keywords.

– Iterate on initial response.

– Trust, but verify. – Tweet

- Just talk to them how you’d want someone to talk to you if it was you in there. – Tweet

- Provide good (and bad) examples of output, and don’t forget a few edge cases. – Tweet

- Keep hitting the ball back and forth across the net: 1. “thanks but I think these are a little too ‘salesy’ — could you try to generate some ideas that are a little more down to earth” 2. “ok, we are getting there, but still a little overheated. could you try again” – Tweet

- These are my top 10 for folks new to GenAI: 1. You have to provide all of the context the model needs to answer your question if that context is not likely to appear in the model’s weights. It will take a while to gain an intuition about what types of knowledge is likely to be – Tweet

- Suspend disbelief; collaborate not interrogate; trust no-one; have fun, role play, experiment, test; think of as a facet of intelligence built on achievements of ours, not a robo-rival. Notice book-learning over lived experience, cliches & bluffing in human world too, & do better – Tweet

- It’s a dialogue. Iterative. incremental. Chat improves with feedback. When chat creates code, for example, run the code and give chat the error messages so that it can correct the code. Before asking chat a question, ask it what it knows. Then zoom in. Gradually. 🙂 – Tweet

- One example is worth a thousand words – Tweet

- 1. How to read

2. How to write (optional) – Tweet

- 1. Explain yourself clearly, using lots of examples.

2. Assume you’re talking to a smarter version of yourself that hasn’t heard about your problem yet.

3. When it doesn’t do well, use the steps above to correct it. – Tweet

- 1. The more precise your question or task is, the better and more accurate the response will be. Vague prompts can result in equally vague answers.

2. Provide relevant background or context, especially for nuanced questions or tasks. – Tweet

- 1. Don’t ask them to do too much in one shot, especially if they are unrelated tasks; you’ll get much worse results.

2. Don’t give too much context if you can avoid it. The huge context windows of the newest models isn’t as “free” as you might think, or rather it’s “lossy” – the – Tweet

- Collaborate with them, don’t delegate to them. – Tweet

- Context Window needs to be explained well. @NickADobos is spot on, but this needs to be explained without jargon we are so used to. – Tweet

- 1. How context windows work TL;DR: it doesn’t remember everything in chat

2. It’s a text generator, that is good at patterns, and appearing smart. Not an almighty god doing cognitive work. Hallucinations aren’t ai behaving wrong. They are a feature of generating a bad pattern – Tweet

- Consider the context a human would need when responding to the same request. When asked to create a presentation by your manager with 10-20 words, you have thousands or likely millions in context to inform that. Ppl often get annoyed when it fails, it’s usually not enough context – Tweet

- Understand that they are autoregressive with a context limit and the limitations that impose on the chat interface. – Tweet
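Several replies above note that a chat "doesn’t remember everything" because of the context limit. A minimal sketch of why, assuming a chat interface that simply keeps the newest messages that fit a fixed budget: `approx_tokens`, `fit_to_window` and the budget of 12 are hypothetical, and counting words is only a crude stand-in for a real tokenizer. Real products may summarize or trim differently.

```python
def approx_tokens(text):
    # Crude stand-in for a real tokenizer: count whitespace-separated words.
    return len(text.split())


def fit_to_window(messages, budget):
    # Keep the most recent messages whose combined size fits the budget.
    kept = []
    used = 0
    # Walk backwards so the newest messages are considered first.
    for message in reversed(messages):
        cost = approx_tokens(message)
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    # Restore chronological order.
    return list(reversed(kept))


chat = [
    "My name is Ada and I prefer Python",
    "Here is a long stack trace to debug",
    "What is my name",
]

# With a small budget, the oldest message (the one containing the name)
# no longer fits, so the model would never see it.
visible = fit_to_window(chat, budget=12)
```

In this toy run the question about the name survives but the message that answered it does not, which is the "lossy" behaviour people run into in long chats.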

Iterate and Simplify for Optimal LLM Performance

- 1. just keep trying things – LLMs keep surprising me,

2. Start simple, add more techniques, context, guidance etc. step by step – with LLMs I found, less is often more.

3. Keep a human in the loop and/or be transparent about using LLMs – otherwise prepare for unpleasant – Tweet

- When your llm starts omitting code generated in prior steps of an existing chat, end the chat and replay your steps until before that happened. Take a different branch next time – Tweet

- 1. Don’t give too much information at once to process, start simple and build on top of previous ones

2. Want a contrary opinion from LLM? Don’t sound like your opinion is sacrosanct – it will mostly agree with you even if it’s wrong.

3. Role playing and few shot examples matter. – Tweet

- 1. Context

2. Difference is assumptions

3. Articulating clearly what you want (run it against another LLM to see if what you mean is what you say).

4. Being able to go back in a thread and restart (You get do-overs with LLMs that you might not get with people 🙂 ) – Tweet

- Well one thing I learned is it’s best to start a new chat if the LLM is going down the wrong path, easier than forcing it back. – Tweet

- Having moderate experience with a topic / framework is important for peak quality of the response. At present, using llms for efficiency > using llms to do something you don’t know how to do. – Tweet

- at least when it comes to writing code, the task needs to be very well defined, like one would do when creating a user story for developing software. If the details are vague then you leave the LLM open to interpretation and more likely to make mistakes – Tweet

- The most important thing, and this has always been true even if not using an LLM, all good software development starts with engineering a solution first before building it. If you attempt to get the LLM to do that part you’ll create as many problems as you solve building – Tweet

- Use the LLM to explore your own understanding of the problem space and what you want to achieve. This can help improve your prompting and interpretation of the outputs. – Tweet

- LLMs…

•Pander. Don’t prime answers, ask straight.

•Only know text. Don’t ask spatial, reasoning etc.

•Hallucinate and invert. Double-check.

•Get stuck. Start over.

•Master ALL languages, jargons, styles etc.

•Are formidable documentalists. – Tweet

- 1. hallucinations are still a thing, be wary when LLMs generate links and code snippets

2. data quality of training content can sometimes be dubious leading LLMs to hallucinate more often or be biased in various ways

both will likely be addressed eventually – Tweet

- LLMs…

•Pick and imitate register. Talk like constructive, competent people.

•Are easily lost. Examples and feedback help.

•Can misbehave. Be harsh if needed, but stay just. – Tweet

- For optimal results, provide ample context. Prompting the LLM with ‘Feel free to ask clarifying questions’ and doing the due diligence of answering the questions often yields much better results. – Tweet

- The more explicit you are the better the output. The LLM can not read your mind and there is a lot of ambiguity when interpreting language. – Tweet

- One issue I am seeing more of – Often i ask a question on a choice it made. The LLM assumes I don’t like it or it’s wrong – it then starts to apologize and course correct. More and more I add something like “not refuting or arguing, just trying to understand” etc. – and that – Tweet

- They are inherently unreliable in more than one sense, which accumulates the more requests you run in a chain. The Six Sigma approach is devastating to LLMs. – Tweet

- Treat it like a very intelligent junior employee who just started at your company and lacks context. Give the LLM the same level of detail for every instruction you would give to this junior employee. – Tweet

- That LLMs are not to be trusted as they reliably fail at information due to multiple effects, including hallucinations. That LLMs don’t actually understand things and don’t have common sense. It is mandatory to adapt expectations and ways of working to successfully use them. – Tweet

Craft Effective Prompts for Consistent Results

- How to prompt – Tweet

- Carefully consider keywords, and prioritise them by locating them early and at the end of longer prompts. – Tweet

- If you want stable results across models and are looking to build robust pipelines you should stop hand writing prompts and move toward prompt optimizers. https://ycombinator.com/launches/L4V-hamming-let-ai-optimize-your-prompts-free-for-7-days… Also built into DSPy! – Tweet

- While crafting logic and system prompts, always keep a parallel thought in your mind: what would I respond to this prompt and context? – Tweet

- 1. Prompts matter.

2. Treat it like a tool, and you’ll get a tool. It’s only as smart as you let it be. – Tweet

- to ask them the best way to prompt them – Tweet

- there is a single prompt that gets the job done, thousands that screw it up – Tweet

- 1. Always add a system prompt at the beginning: Define a role. Ex: “You are a senior software developer who excels in…”

2. Context Matters: Provide a detailed background for better insights.

3. Clear Prompts: Specificity is crucial for accurate outputs. – Tweet

- If a large global prompt doesn’t work, try step by step. If it does work, but has errors in response – Ask it to fix errors one by one. Insist, like you would with a human supplier. If “do this” doesn’t work, try “Strictly do this”. Amazing how effective insisting is 🙂 – Tweet

- The better the prompt the better the output. You don’t need a Meta framework for 90% of things – Tweet

- They don’t exist between prompts – Tweet

- Don’t rely on the model’s weights alone. Be explicit in the prompt and give it pointers to what you’re expecting. Let it “clean up” or “translate” your prompt rather than “come up” with an answer based on its training. Exception: generating lists for inspiration. – Tweet

- prompt engineering, in order to get the most desired outcome in handy. – Tweet

- It lies

Q: Who was the second person to walk on the moon?

A: Pete Conrad

Q: can you name the crew members of Apollo 11?

A: I got the right answer.

Q: Then how come Pete Conrad was the second person to walk on the moon?

A: My apologies. Indeed Buzz Aldrin was the second pe… – Tweet

- How to say no. – Tweet

- How to use smart phone or computer with internet – Tweet

- Vibe is an input. – Tweet

Don’t Expect Human-Like Understanding from LLMs

- LLMs have no “thoughts” or understanding, they’ll simply write the statistically most probable answer based on your input and have been prompted to act as assistants. – Tweet

- LLMs are incredibly random. Responses can change wildly based on a single character difference in the prompt. Even one extra space. They are best for prompts that have a range of possible responses, not for prompts where you expect one consistent answer. – Tweet

- Cease prompting their LLM to give them a viral tweet with forced irony forcing awareness to an issue. That’s my own personal opinion, bro. But, believe what you want. – Tweet

- If you don’t know what you want, the LLMs too likely won’t know. And if they don’t know they will make it up. And if you don’t know, you will not know that they made it up. – Tweet

- Be sure not to put contradictions in your prompt. LLMs, in contrast to humans, try to follow instructions as close as possible. They usually handle contradictions by ignoring some part of the instructions or even ignoring facts. – Tweet

- It’s biased toward its creators. So if the majority of companies that are developing LLMs are owned by the same investors, then in fact, we are having a single LLM that is biased toward those investors’ goals. E.g., chatgpt is more toward liberalism and refuses to operate otherwise – Tweet

- Basically, you need to understand that LLMs are not humans. You can’t assume they’ll understand what you mean when you write short prompts. You get the best out of LLMs when you provide detailed instructions of what you want without letting laziness get in the way. In my – Tweet

- Don’t assume anything. LLM doesn’t learn like a human. Any assumption you make about what LLM should or shouldn’t know is probably wrong. – Tweet

- Describe your context and the role from which you want the LLM to look at your input (critical, tech/non-tech, …). Think what you could expect from a wise, random person you ask on the street. Do not expect more from the LLM’s answer. Also only trust it similarly. – Tweet

- Give it an option to not do something either by allowing the LLM to reply with something like “I don’t know” or tell it to ask follow up questions. – Tweet

- There is nothing fundamentally important for that interaction. These LLMs are just minimum viable versions of something much bigger that will come soon. That something will know how to interact with us no matter how we behave. – Tweet

- 1. that you need to cram the relevant data into the prompt. LLMs are far far better at transforming what you give them than they are at answering solely on the basis of the lossy representation of the training data encoded into the model itself – Tweet

- The side effect fact that formulating a question for an LLM makes you think better. When coding, for example, we often run questions in our heads and then get to coding. Being forced to formulate a question properly may lead you to trajectories you may have never considered. – Tweet

Treat LLMs as Guided Children, Not Mind Readers

- The game isn’t to ‘one shot it’. It’s to get something you never thought was possible or that you’d never think of. I always say they are like children, they need guidance (back story and reason) and repetition …but room and time to play and grow. – Tweet

- Honestly, flexibility and patience. We need to give up a little bit of control and expectation of all things to be so rigid. – Tweet

- When working with it, you need to expect it to not read your mind, but work with it as if you’re asking for help from an insanely gifted child and give yourself patience to shape the result. – Tweet

- if it makes life better? yes. but always? no. – Tweet

- When asking it how to implement something, always give it options. If you can’t think of options, give it a vague out. Instead of asking, “should I do this to my code?”, ask it “should I do this to my code, or is there some better way I could do it?”. Otherwise the models are too – Tweet

- 1. always consider that it doesn’t know what assumption you’re making. so it might infer them sometimes but often it’s much better to over explain what you want.

2. they will often run ahead on a suggestion you have even if it’s not the best path so I find myself adding “if this – Tweet

- It cannot read your mind, if you don’t explain exactly what you want you will not get what you want – Tweet

- I am not ready to give advice based on a bet that “something much bigger will come soon” – prompting advice that worked for GPT-4 over a year ago is still mostly relevant to working with the best models today – Tweet

- to be concise and always assume the response is wrong, even ever so slightly. Check and correct. – Tweet

- – you have to provide context otherwise it assumes

– it will often agree with you or apologize/correct itself even if you question the right answer – Tweet

- The limited ability for non-linear (or non left-to-right) reasoning. Encouraging the model to spend more time planning and discussing beforehand often leads to better results. This may be less the case with Claude etc where reasoning tokens are happening behind the scenes. – Tweet

Context is Key for Effective Interaction

- Context is everything – Tweet

- Context is all you need. – Tweet

- Understanding how context works – Tweet

- It’s all about context – Tweet

- #contextmaxxing – Tweet

- Context, Task & Purpose – Tweet

- Subjectivity. Context. Brain rent. – Tweet

- I’d say understanding the concepts of context, attention, and likelihood – Tweet

- 1. Context and memory (the degree to which you can refer to previous parts in the chain of context)

2. Temperature and hallucinations. The tradeoff between extremes of temperature

3. It’s wise to have benchmark questions of your own to test when a new company/model comes out – Tweet

- local maxima sensing – Tweet
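The temperature tradeoff mentioned above can be illustrated with a temperature-scaled softmax, which is the standard way temperature reshapes a next-token distribution. This is a minimal sketch: the three logits are made up for illustration, and `softmax_with_temperature` is a hypothetical helper, not any vendor's API.

```python
import math


def softmax_with_temperature(logits, temperature):
    # Divide logits by the temperature before normalizing.
    # Assumes temperature > 0.
    scaled = [x / temperature for x in logits]
    # Subtract the max for numerical stability.
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Made-up scores for three candidate next tokens.
logits = [2.0, 1.0, 0.0]

# Low temperature sharpens the distribution toward the top token;
# high temperature flattens it, giving unlikely tokens more of a chance.
cold = softmax_with_temperature(logits, 0.5)
hot = softmax_with_temperature(logits, 2.0)
```

At the cold end the output becomes repetitive and predictable; at the hot end the unlikely tokens are sampled more often, which is one reason responses drift further from the most probable continuation.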

Acknowledge the Stateless Nature of LLMs

- your interaction is with a stateless inference that exists for a fleeting moment, current ai is not continuous which is easy to forget. This has implications for what you are building for: – Tweet

- that they’re stupid next-token predictors and not intelligent agents. If you expect conscious beings, you’ll be surprised and disappointed. But they’re incredibly good at predicting the next useful token. – Tweet

- That standard intuitions for computers don’t apply. Treat it the way you would treat a knowledgeable but fallible friend. Not like a purely logical SciFi AI with perfect memory. – Tweet

- Normally I hate predictions and terms like this, but the next 20 years are going to be the era of “embodied intelligence” People are imagining humanoid robots, this will be a very small fraction of it. Compared to the software problem, the body is trivial. Imagine asking your – Tweet

- Inherent lack of memory about previous interactions. Every message is starting from zero and only seems coherent because background info and the previous messages and responses are sent before the latest message. – Tweet

- They’re not sentient. They generate responses by predicting patterns from vast data, which means they’re as fallible as they are impressive. The key is precision: your queries must be meticulously clear and well-contextualized. – Tweet

- it doesn’t have a memory like hooomans – Tweet

- Whenever the conversation derails, you need to cut that branch and keep the model in the “right universe of probabilities” by editing prompt/messages. This is also why I was skeptical about Reflection, because if it really worked, it would be breakthrough. – Tweet

- They aren’t deterministic – Tweet
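The point made above, that every message starts from zero and only seems coherent because the earlier messages are sent again, can be sketched as follows. `fake_model` is a hypothetical stand-in for a real LLM call, and the `role`/`content` message shape is an assumption borrowed from common chat APIs; no real service is called here.

```python
def fake_model(messages):
    # Hypothetical stand-in for an LLM: it can only "see" what is in `messages`.
    prefix = "My name is "
    for message in messages:
        if message["role"] == "user" and message["content"].startswith(prefix):
            return "Your name is " + message["content"][len(prefix):]
    return "I don't know your name."


# The client, not the model, holds the conversation state.
history = []


def send(user_text):
    # Append the new message, then resend the whole history every turn.
    history.append({"role": "user", "content": user_text})
    reply = fake_model(history)
    history.append({"role": "assistant", "content": reply})
    return reply


send("My name is Ada")
with_history = send("What is my name?")

# The same question sent without the earlier messages has nothing to draw on.
without_history = fake_model([{"role": "user", "content": "What is my name?"}])
```

The "memory" lives entirely in the `history` list that the client resends, which is also why editing or cutting earlier messages changes what the model does next.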

Leverage AI for Prompt Suggestions and Refinement

- I like to ask them for prompts to use for a given purpose, it tends to be more detailed than I would be. Can also use this to add example Q&A if need be. – Tweet

- We need AI assistance with prompts and suggestions on rewriting your queries similarly to Grammarly’s for spellchecking and correctness. – Tweet

- “Give me a list of questions I can answer to help improve the quality of the response” – Tweet

- Let’s ask one. – Tweet

- Can we get our hands on all the prompts used in fine tuning data or at least major ones. Highly unlikely they will release it. – Tweet

- anybody got tips for image generation? i hardly ever use the image features, but lordy, they struggle! even with clear, verbose prompts using art school vocabulary, specific artist citations, and example attachments, lots of iterations, etc. – Tweet

- I’m doing a podcast with the Cursor team. If you have questions / feature requests to discuss (including super-technical topics) let me know! For those not familiar, Cursor is a code editor based on VSCode that adds a lot of powerful features for AI-assisted coding. I’ve been – Tweet

- They’re useful in the same way Google or the internet or stack overflow is useful plus one big advantage: your question doesn’t have to take your specific situation and change it to a generic case that someone else has already answered, you can just ask about your exact case! – Tweet

Start with a Jailbreak for Objective Analysis

- Using a jailbreak should always be your first step if you want less biased, more objective and fact-based analysis of sensitive or controversial sociopolitical issues. – Tweet

- Kinda like Google, small changes in wording can give you quite different results. – Tweet

- That you should only use it to get answers you can verify with a separate tool, or somehow evaluate yourself (e.g. text quality). – Tweet

- It’s not a tool – Tweet

- dont treat it like a search engine. think about the outcome and output you are trying to achieve. – Tweet

- There is a considerable chance the answer is wrong, so likely everything needs to be double checked. – Tweet

- I can only speak for the use cases I’ve come across wrt legal work, but don’t use them for tasks where you need a reference. Using them to draft or review documents is fine. Asking for a case law reference is a no-no. And of course, make sure you’re not leaking confidential stuff – Tweet

Master Prompt Engineering for Better Outputs

- lol. Nice try. If your business needs to level up I can do certification class. Your employees will get Level 4 Prompt Engineering Classification. DM if interested – Tweet

- I like to write no full sentences with error and llm understand. So prompt engineering bullshit – Tweet

- Turing test. – Tweet

- Use instructions to change the style of the output that the LLM produces. For Claude you have to make a project first in order to be able to set the instructions. – Tweet

- – Understanding how an LLM system such as ChatGPT or Claude works, at a basic technical level.

– Prompting skills. Understanding the difference between effective and ineffective prompting. – Tweet

- understand the english language and HOW it’s used (sadly, even english speakers have a hard time w/ correct language use). know grammar and syntax, context and nuance. be clear, succinct, specific when creating prompt. edit, edit, edit before sending prompt. – Tweet

Understand LLMs as Statistical Predictors

- Language models cannot generalize the simple formula “A is B” to “B is A.” – Tweet

- 1) tokenizers/decoding strategies are both incredibly important and invisible to most users. Remember that what you input is not what the model sees exactly, and what you read is not what the model output directly. 2) repeat #1 for the crowd in the back – Tweet

- It’s a bit sad and confusing that LLMs (“Large Language Models”) have little to do with language; It’s just historical. They are highly general purpose technology for statistical modeling of token streams. A better name would be Autoregressive Transformers or something. They – Tweet

- Language – Tweet

- They are next word predictors. Everything is downstream from that. – Tweet

- The output is encoded in the input, the model is just a statistical decompression engine. This means that they can only ever amplify your mind, they can’t think for you, however they can translate your question into more formal language & that may decompress into something useful – Tweet

Stay Focused on High-Impact Tasks

- Try to stay in the high impact zone e.g. through breaking tasks up and don’t expect perfect results at all times – Tweet

- Being able to define goals and objectives. – Tweet

- Focus loquaciousness to refine results that will otherwise always regress to mean averages. – Tweet

- If it doesn’t understand you, ask it to help clarify your question. If you’re not getting the answer you need, break your question into smaller parts. If you don’t know how to break it down, ask it to help you break it down. – Tweet

- •You’re interacting with a superposition of all humanity, so defining a specific persona that would be helpful for your task produces better results.

•Avoiding assumptions and explaining your goal in the clearest way possible is the key to avoiding running around in circles. – Tweet

Understand LLMs as Probabilistic Text Generators

- they are reality-adjacent – Tweet

- that they have to make sense – Tweet

- That they are probabilistic systems. – Tweet

- That they’re random text generators and any appearance of intelligence is accidental and illusory. – Tweet

- themselves – Tweet

Verify Information, Never Trust Blindly

- Verify, never trust. – Tweet

- Never trust them – Tweet

- Just don’t. – Tweet

- Anything coming out of those things can be completely false. Don’t just accept it as truth. – Tweet

Engage Actively to Maximize LLM Utility

- that it’s only as useful as how many questions you’re asking it. Any initial understanding beyond that would be an overkill in my opinion – Tweet

- It is only an upscaler not a freewin. The more you know the better it works, but compared to a person you can talk with it in shortcuts. The skill is to always reposition it constantly, before it goes off in the wrong direction. You can also work with labels within its answers – Tweet

- They’re useful/powerful for a wide range of tasks. Their usefulness is highly variable, depending on context & the skill of the user. A user’s existing expertise can be greatly amplified by the system, but novices probably benefit most. Ask them for help on how to use them. – Tweet

- You no longer need to learn regex etc, you can just act like you know it at an expert level now, similar with syntax of virtually any language or technology. It is better at writing debugging output for you to find the problem in the code than finding the problem in the code… – Tweet

Communicate Clearly and Specifically

- Be specific, clear, and thorough. Same as communicating with humans, but more important. – Tweet

- Be super clear with instructions. Funnily enough, we should be doing that with our instructions to our fellow humans, but we don’t! – Tweet

- Effective writing – Tweet

- BE SPECIFIC. Every one of my customers asks why a query they make doesn’t return a result at all or a result they desire and it is because of the quality of their query over and over again. Some customers understand this out of the gate, some need some training. – Tweet

Be Knowledgeable to Identify Hallucinations

- Britannica’s Great Books of the Western World – Tweet

- Hallucinations are a thing and the model doesn’t know if it’s hallucinating or not. That’s why the user using an LLM on any field has to be knowledgeable on that field in order to determine what’s a hallucination. This means you can’t use an LLM reliably to do something you can’t. – Tweet

- LLMs don’t have the notion of True or False – Tweet

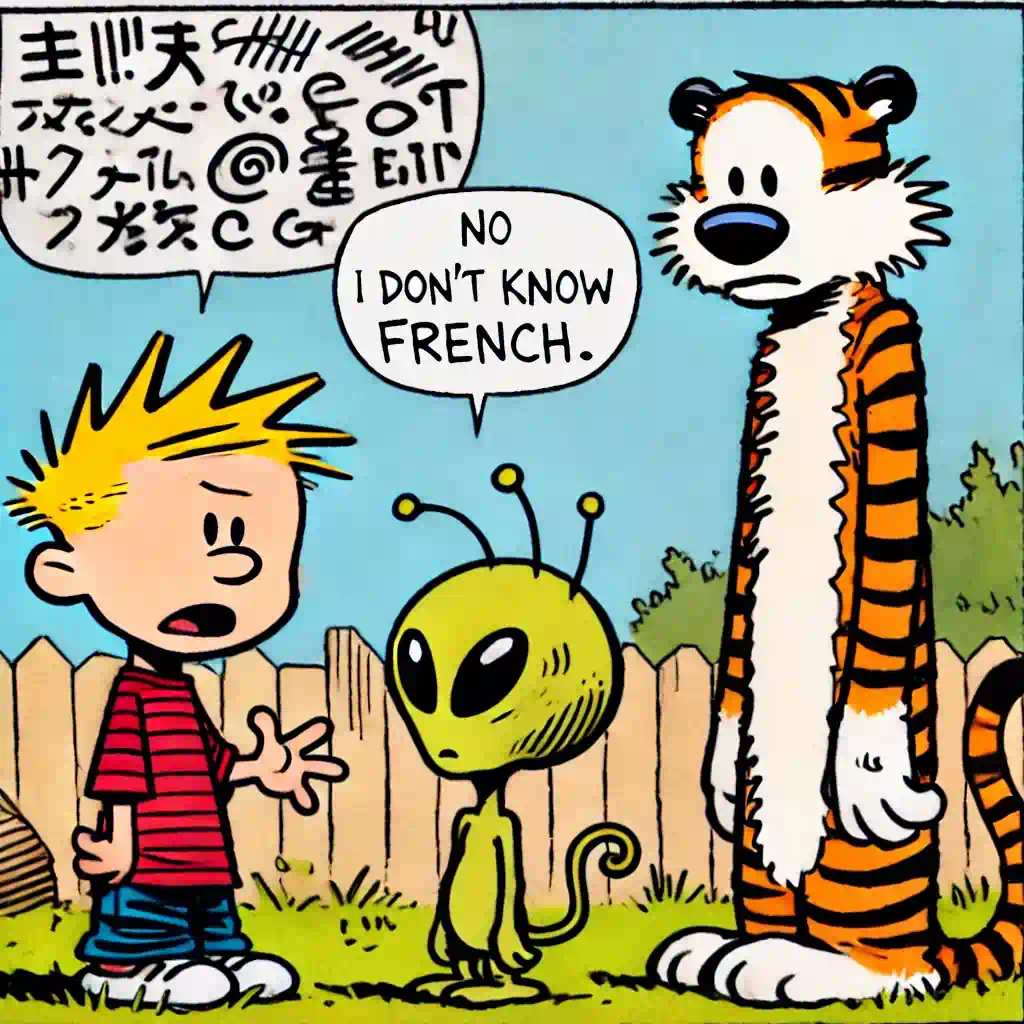

Models are total “yes men”…LoL!

Using Richard Seroter’s line – “Treat AI assistants as a slightly-drunk knowledgeable friend”, I brainstormed with my bots to create this cartoon - https://mvark.blogspot.com/2023/07/beware-slightly-drunk-wisdom-of-ai.html